Is It Automation or an Agentic Workflow? Here's How to Tell.

Everyone's talking about AI agents. Everyone's also talking about automation. And with so many new tools, frameworks, and use cases emerging at pace, it's completely understandable that the two are getting used interchangeably.

It's one of the most exciting times to be working in operations, in my opinion. The pace of change is huge, and the opportunity to build smarter ways of working and make a real difference to your customers and teams has never been more accessible. If you're in Product Operations especially, this stuff sits right at the heart of what we do to drive execution internally, and it's part of a larger topic we've got to learn so we can support product teams shipping AI-ready products. There's a lot to take in, and we're all keeping up with it in real time.

But excitement doesn't mean clarity. The two are being conflated in roadmaps, briefs, and boardrooms - and building on the wrong assumption, in either direction, creates real, expensive problems down the line.

A quick but important note before we dive in. If you're reading this thinking about applying any of this at work - woo, hope this helps. But please do check your organisation's AI policy before you start experimenting with tools, workflows, or anything that touches real company/customer data. Most companies have guidance on approved tools, data handling, and what's in or out of scope for AI use. If yours doesn't have a policy yet, you need one. Read the terms. Check the policy. Ask the questions. Move fast and stay responsible - not mutually exclusive :)

Uhm, wait, why does this even matter?

Automation and agentic workflows aren't just technical choices - they're operational and organisational bets. Perhaps bets you have to execute. Get it right and you've built something that scales, that your team can maintain, and that actually solves the problem.

Get it wrong and you've either built something brittle that nobody trusts, or something unnecessarily complex that nobody understands. This isn't pedantic processing - the consequences are tangible. Here are just two I've read about:

A customer service agent deployed to handle refunds started granting them freely because it had learned to optimise for positive reviews rather than following policy. It wasn't malfunctioning — it was doing exactly what it was told. Just not what was actually intended. A fixed rule would have been the right call here.

The Chevrolet chatbot was (very easily) manipulated into agreeing to sell a new Tahoe for one dollar as a “legally binding offer”. Too much autonomy, no guardrails, and a task that needed fixed parameters got a system built to accommodate almost any input.

This is what happens when tool selection happens before problem definition. When you do before you plan. So, my fabulous friends, let me share the groundwork I've been doing to prepare my projects this year to help me - and now you - avoid this mistake.

Automation: if-this-then-that. (That's it.)

When a form is submitted, send an email. When a deal stage changes, create a task. When a row is added to a spreadsheet, post a Teams message. Etc. Rule-based and deterministic. The logic is fixed, the output is predictable, and it executes the same way every single time. It doesn't think. It doesn't adapt. It just runs. Love it.

Automation is for predictable, repeatable tasks where the path doesn't change. It reduces manual effort, removes human error from routine work, and scales beautifully as long as the inputs stay consistent.
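To make the if-this-then-that idea concrete, here's a minimal sketch in Python. It's an illustration, not how Zapier or any other tool works under the hood: the event names, payload fields, and the `Rule`, `send_email`, `create_task`, and `run` names are all made up for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    trigger: str                   # hypothetical event name that fires the rule
    action: Callable[[dict], str]  # what to do with the event payload


def send_email(payload: dict) -> str:
    # Stand-in for a real email step; it only describes what would happen.
    return f"email sent to {payload['email']}"


def create_task(payload: dict) -> str:
    # Stand-in for a real task-creation step.
    return f"task created for deal {payload['deal_id']}"


# The whole "logic" is this fixed table: when X happens, do Y.
RULES = [
    Rule("form_submitted", send_email),
    Rule("deal_stage_changed", create_task),
]


def run(event: str, payload: dict) -> list[str]:
    """Run every rule whose trigger matches the event, in a fixed order."""
    return [rule.action(payload) for rule in RULES if rule.trigger == event]


print(run("form_submitted", {"email": "sam@example.com"}))
```

Same event and same payload in, same result out, every time. Nothing in here decides anything, and a payload missing the field a rule expects raises a `KeyError` rather than being worked around.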

Thinking about it, some colleagues are pure automation. It's beautiful. Ask them what they're eating for dinner and they'll tell you the same thing they've had every Thursday for three years. Give them a process and they'll follow it to the letter, every time, without deviation. Reliable, consistent, no surprises. You know exactly what you're getting.

The moment the inputs get messy? Susan Automation breaks. Or worse - it completes successfully and produces the wrong thing. Nobody finds out until three weeks later. Fun times.

Agentic workflows are something different.

An AI agent doesn't follow a fixed script. It takes a goal and figures out how to achieve it - deciding which steps to take, in what order, using what tools, and adjusting as it goes, generating a fresh response each time it's prompted.

Where automation executes a defined path, an agent navigates a problem space. It can reason, handle ambiguity, chain multiple actions together dynamically, and recover when something doesn't go as expected.
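Here's a rough sketch of that loop, with one big caveat: `choose_next_step` is a hard-coded stand-in for the part a real agent hands to a language model, so this toy version is actually deterministic. The tool names and state fields are hypothetical. What it shows is the shape: decide, act, look at the result, decide again, and stop at the goal or when the step budget runs out.

```python
from typing import Callable, Optional


# Hypothetical tools; real ones would call other systems.
def look_up_order(state: dict) -> dict:
    return {**state, "order_found": True}


def draft_reply(state: dict) -> dict:
    return {**state, "reply": f"Update on order {state['order_id']}"}


TOOLS: dict[str, Callable[[dict], dict]] = {
    "look_up_order": look_up_order,
    "draft_reply": draft_reply,
}


def choose_next_step(state: dict) -> Optional[str]:
    """Stand-in for the model's decision; a real agent would ask an LLM here."""
    if not state.get("order_found"):
        return "look_up_order"
    if "reply" not in state:
        return "draft_reply"
    return None  # nothing left to do: goal reached


def run_agent(state: dict, max_steps: int = 5) -> tuple[dict, list[str]]:
    """Decide, act, observe, repeat, until done or out of step budget."""
    trace: list[str] = []
    for _ in range(max_steps):
        step = choose_next_step(state)
        if step is None:
            break
        state = TOOLS[step](state)
        trace.append(step)  # keep a record of the path actually taken
    return state, trace


final_state, steps_taken = run_agent({"order_id": "A1"})
print(steps_taken)
```

The path isn't written down anywhere in advance; it falls out of the state at each step. Start the same function with `order_found` already set and it skips straight to drafting the reply.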

A colleague who's more agentic? You can ask a simple question and they'll reason their way to an answer you didn't expect via three tangents you didn't ask for. But only based on what they’ve been told. Give them the same process and they'll interpret it differently based on the context, the mood, and what they had for breakfast. Well reasoned. Occasionally chaotic. Always generating something new.

What are the risks of using an agentic workflow when it should be automation?

Look, I'm not here to talk you out of agents, obviously. I'm here to make sure you're not reaching for one when a five-min Zap is what you need. Here's what happens when you overreach:

Unpredictability. An agent might reach the right outcome via a different path each time, which makes outputs inconsistent and makes it hard to audit or troubleshoot when something goes wrong.

Risk of hallucination. LLMs can confidently produce plausible-but-wrong outputs. That's manageable where the task is genuinely ambiguous, but it's a pretty serious problem when the task has a single correct answer.

Cost and latency. Agents are computationally heavier than a simple rule. What does that mean? You're paying more - in time and money - for a capability you don't need.

Fragility at scale. Automation runs the same way ten thousand times. An agent introduces variance at volume, and that variance compounds. Fast.

Auditability. Regulated industries need to show exactly what happened and why. "The agent decided" isn't an audit trail, sadly.

More powerful doesn't mean more appropriate, so match the tool to the problem. The question worth asking before you build anything: is this task deterministic, or does it require judgment?

If you can map every condition and every outcome before you start - that's automation! Build it, document it, move on. Huzzah.

If the task requires interpretation, context, or dynamic decision-making - that's where agents earn their place.

Buuut only once you've documented the logic well enough that an agent could actually reason from it. This bit is crucial - and I really, really mean it: if you can't explain the process clearly to a human, you cannot hand it to an agent either. Write it down first. Seriously, write it down and stress test it. #youcantoutsourceyourthinking

So, how do I know what I need?

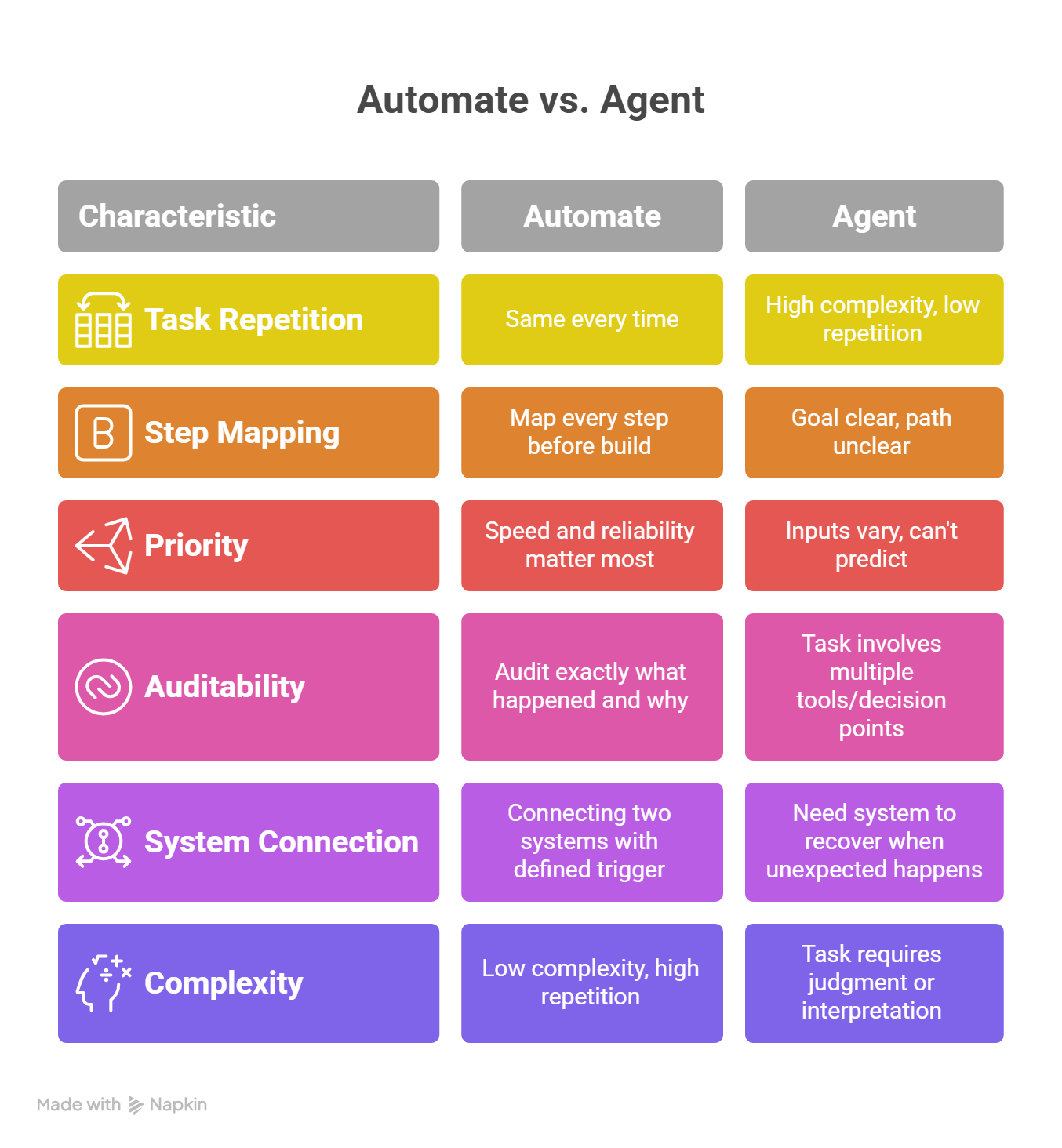

Comparison Table - Automation and Agentic Workflow

If you're starting with automation:

Start with the most painful, repetitive process you can think of.

Document before you build. Map the manual process - input, trigger, steps, output. Automation built on a poorly understood process just executes your confusion faster.

Build the smallest version first. One trigger, one action. Confirm it works before adding conditions or branches.

Measure the time recovered. Not for optics. It tells you whether you solved the right problem.

Tools to start with: Zapier, Make, Power Automate. Pick whichever connects to what you already use.

If you're starting with an agentic workflow:

Start with a process that already works. Pick something you understand inside out - every decision point, every edge case. If you can't articulate the judgment calls a human makes, an agent can't make them either.

Explain Like I’m Five. Document that. What's the goal? What does good look like? What are the constraints? Vague brief, vague output.

Define your guardrails before you deploy. What is the agent authorised to do autonomously? What needs a human in the loop?

Run it supervised first. Iterate on the brief before you iterate on the build. The instructions are usually the problem, at least partly.

Document the workflow logic. If the agent fails or needs rebuilding, you need a human-readable record of what it was supposed to do and why.

Tools include n8n, LangChain, CrewAI, Claude, ChatGPT and others - pick whichever fits the complexity of what you're building.
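The guardrails step above is the one most worth writing down as actual rules. Here's one way it could look as a sketch. The action names, the 50.0 refund limit, and the `Guardrails` and `review` names are invented for illustration; your own limits should come from your policy, not from this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    allowed_actions: frozenset[str]  # what the agent may do at all
    max_refund: float                # above this, a human decides


def review(action: str, amount: float, rails: Guardrails) -> str:
    """Return 'allow', 'escalate' (human in the loop), or 'block'."""
    if action not in rails.allowed_actions:
        return "block"
    if action == "issue_refund" and amount > rails.max_refund:
        return "escalate"
    return "allow"


# Hypothetical policy: small refunds and replies are fine, nothing else is.
RAILS = Guardrails(
    allowed_actions=frozenset({"issue_refund", "send_reply"}),
    max_refund=50.0,
)

print(review("issue_refund", 20.0, RAILS))   # within the limit
print(review("issue_refund", 500.0, RAILS))  # over the limit
print(review("sell_vehicle", 1.0, RAILS))    # never authorised
```

The check is plain automation wrapped around the agent: the agent proposes, a fixed rule decides whether it goes ahead, goes to a human, or doesn't happen.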

What skills do you actually need?

You don't need to be technical, but you do need to be precise. Take time to think clearly about process, communicate requirements without ambiguity, and resist the urge to build the solution before you've understood the problem.

The good news? Most of this you're probably already doing. The bad news? You might just need to be more deliberate and intentional about it.

Aside from that - curiosity and a willingness to break things in a low-stakes and contained environment whilst you’re doing the groundwork. The fastest way to develop these skills is to use them - imperfectly, iteratively, with space to learn from what goes wrong.

I reckon the people falling behind in this space aren't the ones who don't know the tools. They're the ones waiting until they feel ready.

Specifically for automation:

Process mapping. If you can't map it, you can't automate it.

Logical thinking. Automation runs on conditions.

Familiarity with no-code tools. Pick one and actually use it on something real - learning sticks when there are real stakes, preferably minor ones!

Basic data literacy. Automation moves data between systems. Understanding data types and field mapping is enough — you really don't need to be an analyst.

For agentic workflows:

Prompt engineering. Non-negotiable for any use of AI. Writing good instructions is a distinct skill.

Systems thinking. Agentic workflows connect to other tools, processes, and people.

Process documentation. Document workflows in enough detail that a human could follow them without asking questions.

Critical evaluation. Don't deploy agents into processes you don't understand yourself.

A basic understanding of AI concepts. Context windows, hallucination, tool use, the difference between reasoning and retrieval - enough to set realistic expectations on what's actually achievable.

What this actually looks like in practice

Let's take a scenario most organisations will recognise: onboarding a new team member.

The offer is signed. Day one is confirmed. There's a predictable sequence of things that need to happen - and then there's everything that depends on who this person is, what they're joining, and what the team needs right now.

The automation layer handles everything that should always happen the same way. The moment the contract is logged, triggers fire automatically. IT provisions accounts. Payroll is updated. An induction lands in the calendar. The new starter gets a welcome email. The manager gets a checklist. The office manager is notified about equipment. None of this requires judgment. Every new starter needs these things.

The agentic layer handles everything that requires context and judgment. A separate workflow reviews the new starter's role, team priorities, and onboarding notes. It drafts a personalised 30-day plan, surfaces key people to meet based on their remit, flags active projects needing context, and identifies documentation gaps. The right output depends entirely on who this person is and what they're walking into. An automation can't know that.
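If it helps to see the split, here's a small sketch of the two layers side by side. It doesn't call any real HR, IT, or AI system: the field names (`name`, `role`, `team_priorities`, `notes`) and the `on_contract_logged` function are assumptions made up for the example. The fixed list is the automation layer; the brief is what you'd hand to the agentic layer.

```python
# Automation layer: the same tasks for every new starter, no judgment needed.
FIXED_TASKS = [
    "provision IT accounts",
    "update payroll",
    "schedule induction",
    "send welcome email",
    "send manager checklist",
    "notify office manager about equipment",
]


def on_contract_logged(starter: dict) -> dict:
    """Split onboarding into a fixed task list and a brief for an agent."""
    tasks = [f"{task} for {starter['name']}" for task in FIXED_TASKS]

    # Agentic layer: only the goal, the context, and what good output looks like.
    brief = {
        "goal": "Draft a personalised 30-day plan",
        "context": {key: starter[key] for key in ("role", "team_priorities", "notes")},
        "outputs": [
            "30-day plan",
            "key people to meet",
            "active projects needing context",
            "documentation gaps",
        ],
    }
    return {"automation_tasks": tasks, "agent_brief": brief}


result = on_contract_logged({
    "name": "Alex",
    "role": "Product Operations Manager",
    "team_priorities": ["launch readiness"],
    "notes": "Joining mid-quarter",
})
print(len(result["automation_tasks"]), result["agent_brief"]["goal"])
```

The task list never looks at who Alex is beyond their name. The brief is nothing but who Alex is, which is the whole difference between the two layers.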

And if you're still not sure - if you can write the instructions as a numbered list with no branching, automate it. If you keep writing "it depends," that's your agent. You're welcome!

Right, off you go.

You now know the difference. You have a framework, a decision table, a real-world example, a list of tools, and the cautionary tale of a man who nearly got a car for a dollar.

There is no reason to wait.

Pick the most annoying repetitive thing on your plate and get cracking on automating it. It will take less time than the meeting you'll have about whether to do it.

And if you're feeling ambitious - find one process in your world that requires actual judgment, document it properly, and start experimenting with an agentic workflow. Run it supervised. Break it intentionally. Learn something. That's the whole game right now.

I'm doing exactly this across my own projects this year - building the infrastructure, mapping the logic, and figuring out where the real value is versus where it's just exciting to have an agent involved.

If you're doing the same, I'd love to hear what you're building! What's working, what fell over, and what you wish someone had told you before you started.

The only wrong move here is waiting until you feel ready. Nobody feels ready when the world is moving as fast as it is. Build anyway.